This tutorial is an introduction to the FIWARE Cosmos Orion Spark Connector, which enables easier Big Data analysis over context data and is integrated with one of the most popular Big Data platforms: Apache Spark. Apache Spark is a framework and distributed processing engine for stateful computations over unbounded and bounded data streams. Spark has been designed to run in all common cluster environments and to perform computations at in-memory speed and at any scale.

The tutorial uses cURL commands throughout, but is also available as Postman documentation.

- This tutorial is also available in Japanese.

"You have to find what sparks a light in you so that you in your own way can illuminate the world."

— Oprah Winfrey

Smart solutions based on FIWARE are architecturally designed around microservices. They are therefore designed to scale up from simple applications (such as the Supermarket tutorial) through to city-wide installations based on a large array of IoT sensors and other context data providers.

The massive amount of data involved eventually becomes too much for a single machine to analyse, process and store, and therefore the work must be delegated to additional distributed services. These distributed systems form the basis of so-called Big Data Analysis. The distribution of tasks allows developers to extract insights from huge data sets which would be too complex to be dealt with using traditional methods, and to uncover hidden patterns and correlations.

As we have seen, context data is core to any Smart Solution, and the Context Broker is able to monitor changes of state and raise subscription events as the context changes. For smaller installations, each subscription event can be processed one-by-one by a single receiving endpoint. However, as the system grows, another technique will be required to avoid overwhelming the listener, potentially blocking resources and missing updates.

Apache Spark is an open-source distributed general-purpose cluster-computing framework. It provides an interface for programming entire clusters with implicit data parallelism and fault tolerance. The Cosmos Spark connector allows developers to write custom business logic to listen for context data subscription events and then process the flow of the context data. Spark is able to delegate these actions to other workers where they will be acted upon either sequentially or in parallel as required. The data flow processing itself can be arbitrarily complex.

In reality, our existing Supermarket scenario is far too small to require the use of a Big Data solution, but it will serve as a basis for demonstrating the type of real-time processing which may be required in a larger solution which is processing a continuous stream of context-data events.

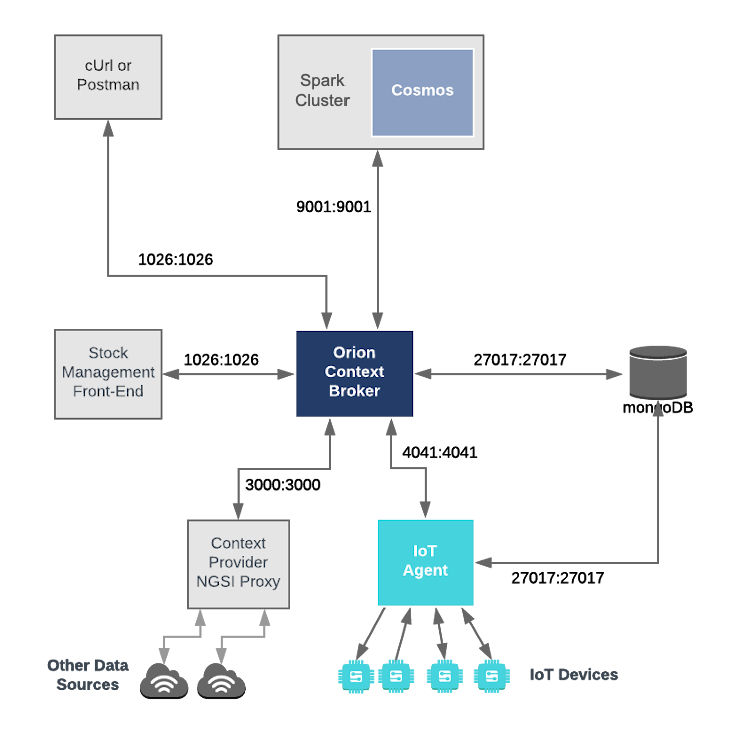

This application builds on the components and dummy IoT devices created in previous tutorials. It will make use of three FIWARE components: the Orion Context Broker, the IoT Agent for Ultralight 2.0, and the Cosmos Orion Spark Connector for connecting Orion to an Apache Spark cluster. The Spark cluster itself will consist of a single Cluster Manager (master) to coordinate execution and some Worker Nodes (worker) to execute the tasks.

Both the Orion Context Broker and the IoT Agent rely on open source MongoDB technology to keep persistence of the information they hold. We will also be using the dummy IoT devices created in the previous tutorial.

Therefore the overall architecture will consist of the following elements:

- Two FIWARE Generic Enablers as independent microservices:
    - The FIWARE Orion Context Broker which will receive requests using NGSI
    - The FIWARE IoT Agent for Ultralight 2.0 which will receive northbound measurements from the dummy IoT devices in Ultralight 2.0 format and convert them to NGSI requests for the context broker to alter the state of the context entities
- An Apache Spark cluster consisting of a single ClusterManager and Worker Nodes
    - The FIWARE Cosmos Orion Spark Connector will be deployed as part of the dataflow which will subscribe to context changes and make operations on them in real-time
- One MongoDB database:
    - Used by the Orion Context Broker to hold context data information such as data entities, subscriptions and registrations
    - Used by the IoT Agent to hold device information such as device URLs and Keys
- Three Context Providers:
    - A webserver acting as a set of dummy IoT devices using the Ultralight 2.0 protocol running over HTTP.
    - The Stock Management Frontend is not used in this tutorial. It does the following:
        - Display store information and allow users to interact with the dummy IoT devices
        - Show which products can be bought at each store
        - Allow users to "buy" products and reduce the stock count.
    - The Context Provider NGSI proxy is not used in this tutorial.

The overall architecture can be seen below:

spark-master:
  image: bde2020/spark-master:2.4.5-hadoop2.7
  container_name: spark-master
  expose:
    - "8080"
    - "9001"
  ports:
    - "8080:8080"
    - "7077:7077"
    - "9001:9001"
  environment:
    - INIT_DAEMON_STEP=setup_spark
    - "constraint:node==spark-master"

spark-worker-1:
  image: bde2020/spark-worker:2.4.5-hadoop2.7
  container_name: spark-worker-1
  depends_on:
    - spark-master
  ports:
    - "8081:8081"
  environment:
    - "SPARK_MASTER=spark://spark-master:7077"
    - "constraint:node==spark-master"

The spark-master container is listening on the following ports:

- Port 8080 is exposed so we can see the web frontend of the Apache Spark-Master Dashboard.
- Port 7077 is used for internal communications.

The spark-worker-1 container is listening on the following ports:

- Port 9001 is exposed so that the installation can receive context data subscriptions.
- Port 8081 is exposed so we can see the web frontend of the Apache Spark-Worker-1 Dashboard.

To keep things simple, all components will be run using Docker. Docker is a container technology which allows different components to be isolated into their respective environments.

- To install Docker on Windows follow the instructions here

- To install Docker on Mac follow the instructions here

- To install Docker on Linux follow the instructions here

Docker Compose is a tool for defining and running multi-container Docker applications. A series of YAML files are used to configure the required services for the application. This means all container services can be brought up in a single command. Docker Compose is installed by default as part of Docker for Windows and Docker for Mac; however, Linux users will need to follow the instructions found here.

You can check your current Docker and Docker Compose versions using the following commands:

docker-compose -v
docker version

Please ensure that you are using Docker version 18.03 or higher and Docker Compose 1.21 or higher and upgrade if necessary.

Apache Maven is a software project management and comprehension tool. Based on the concept of a project object model (POM), Maven can manage a project's build, reporting and documentation from a central piece of information. We will use Maven to define and download our dependencies and to build and package our code into a JAR file.

We will start up our services using a simple Bash script. Windows users should download Cygwin to provide command-line functionality similar to a Linux distribution on Windows.

Before you start, you should ensure that you have obtained or built the necessary Docker images locally. Please clone the repository and create the necessary images by running the commands shown below. Note that you might need to run some of the commands as a privileged user:

git clone https://github.com/ging/fiware-cosmos-orion-spark-connector-tutorial.git
cd fiware-cosmos-orion-spark-connector-tutorial
./services create

This command will also import seed data from the previous tutorials and provision the dummy IoT sensors on startup.

To start the system, run the following command:

./services start

ℹ️ Note: If you want to clean up and start over again you can do so with the following command:

./services stop

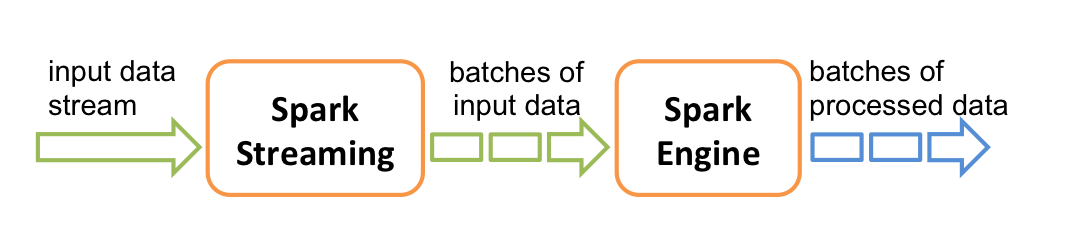

According to the Apache Spark documentation, Spark Streaming is an extension of the core Spark API that enables scalable, high-throughput, fault-tolerant stream processing of live data streams. Data can be ingested from many sources like Kafka, Flume, Kinesis, or TCP sockets, and can be processed using complex algorithms expressed with high-level functions like map, reduce, join and window. Finally, processed data can be pushed out to filesystems, databases, and live dashboards. In fact, you can apply Spark’s machine learning and graph processing algorithms on data streams.

Internally, it works as follows. Spark Streaming receives live input data streams and divides the data into batches, which are then processed by the Spark engine to generate the final stream of results in batches.

This means that to create a streaming data flow we must supply the following:

- A mechanism for reading Context data as a Source Operator

- Business logic to define the transform operations

- A mechanism for pushing Context data back to the context broker as a Sink Operator
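The three parts listed above can be sketched without any Spark dependency. The following is only an illustration using plain Scala collections: `Notification`, `source`, `transform` and `sink` are hypothetical names invented here, not part of the connector, and a real job would read from a receiver and write through a sink operator instead.

```scala
// Plain-Scala sketch of a source -> transform -> sink pipeline.
// All names are hypothetical and only illustrate the shape of a dataflow.
object DataflowSketch {
  // A simplified stand-in for a context notification
  final case class Notification(entityId: String, entityType: String)

  // Source: a fixed in-memory batch; a real job would use a stream receiver
  def source(): Seq[Notification] = Seq(
    Notification("Motion:001", "Motion"),
    Notification("Lamp:001", "Lamp"),
    Notification("Motion:002", "Motion")
  )

  // Transform: the business logic, here keeping only motion sensors
  def transform(batch: Seq[Notification]): Seq[String] =
    batch.filter(_.entityType == "Motion").map(_.entityId)

  // Sink: only formats a message; a real sink would call the Context Broker
  def sink(ids: Seq[String]): Seq[String] =
    ids.map(id => s"would update context for $id")

  def main(args: Array[String]): Unit =
    sink(transform(source())).foreach(println)
}
```

Keeping the three stages as separate functions mirrors the way a streaming job is assembled: the transform step can be changed without touching how data arrives or where it is sent.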

The Cosmos Spark connector, orion.spark.connector-1.2.2.jar, offers both Source and Sink operators. It therefore only remains to write the necessary Scala code to connect the streaming dataflow pipeline operations together.

The processing code can be compiled into a JAR file which can be uploaded to the Spark cluster. Two examples are detailed below; all the source code for this tutorial can be found within the cosmos-examples directory.

Further Spark processing examples can be found on Spark Connector Examples.

An existing pom.xml file has been created which holds the necessary prerequisites to build the examples JAR file.

In order to use the Orion Spark Connector we first need to manually install the connector JAR as an artifact using Maven:

cd cosmos-examples
curl -LO https://github.com/ging/fiware-cosmos-orion-spark-connector/releases/download/FIWARE_7.9.1/orion.spark.connector-1.2.2.jar
mvn install:install-file \
  -Dfile=./orion.spark.connector-1.2.2.jar \
  -DgroupId=org.fiware.cosmos \
  -DartifactId=orion.spark.connector \
  -Dversion=1.2.2 \
  -Dpackaging=jar

Thereafter the source code can be compiled by running the mvn package command within the same directory (cosmos-examples):

mvn package

A new JAR file called cosmos-examples-1.2.2.jar will be created within the cosmos-examples/target directory.

For the purpose of this tutorial, we must be monitoring a system in which the context is periodically being updated. The dummy IoT Sensors can be used to do this. Open the device monitor page at http://localhost:3000/device/monitor and unlock a Smart Door and switch on a Smart Lamp. This can be done by selecting an appropriate command from the drop-down list and pressing the send button. The stream of measurements coming from the devices can then be seen on the same page.

The first example makes use of the OrionReceiver operator in order to receive notifications from the Orion Context Broker. Specifically, the example counts the number of notifications that each type of device sends in one minute. You can find the source code of the example in org/fiware/cosmos/tutorial/Logger.scala.

Restart the containers if necessary, then access the worker container:

docker exec -it spark-worker-1 /bin/bash

And run the following command to run the generated JAR package in the Spark cluster:

/spark/bin/spark-submit \
  --class org.fiware.cosmos.tutorial.Logger \
  --master spark://spark-master:7077 \
  --deploy-mode client \
  --conf "spark.driver.extraJavaOptions=-Dlog4jspark.root.logger=WARN,console" \
  /home/cosmos-examples/target/cosmos-examples-1.2.2.jar

Once a dynamic context system is up and running (we have deployed the Logger job in the Spark cluster), we need to inform Spark of changes in context.

This is done by making a POST request to the /v2/subscriptions endpoint of the Orion Context Broker.

- The fiware-service and fiware-servicepath headers are used to filter the subscription to only listen to measurements from the attached IoT Sensors, since they had been provisioned using these settings.
- The notification url must match the one our Spark program is listening to.
- The throttling value defines the rate that changes are sampled.

Open another terminal and run the following command:

curl -iX POST \
  'http://localhost:1026/v2/subscriptions' \
  -H 'Content-Type: application/json' \
  -H 'fiware-service: openiot' \
  -H 'fiware-servicepath: /' \
  -d '{
  "description": "Notify Spark of all context changes",
  "subject": {
    "entities": [
      {
        "idPattern": ".*"
      }
    ]
  },
  "notification": {
    "http": {
      "url": "http://spark-worker-1:9001"
    }
  }
}'

The response will be 201 - Created.
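The subscription request above does not set the optional `throttling` field. As a sketch only, the same payload can be assembled in Scala with a throttling value added at the top level of the subscription. The helper name `subscriptionPayload` and the value of 5 seconds are assumptions made for illustration and are not settings taken from this tutorial.

```scala
// Hypothetical helper that builds an NGSI-v2 subscription payload as a JSON string.
object SubscriptionSketch {
  def subscriptionPayload(idPattern: String, notifyUrl: String, throttlingSeconds: Int): String =
    s"""{
       |  "description": "Notify Spark of all context changes",
       |  "subject": {
       |    "entities": [{ "idPattern": "$idPattern" }]
       |  },
       |  "notification": {
       |    "http": { "url": "$notifyUrl" }
       |  },
       |  "throttling": $throttlingSeconds
       |}""".stripMargin

  def main(args: Array[String]): Unit =
    // Illustrative values: match every entity, notify the Spark worker, at most one notification per 5 seconds
    println(subscriptionPayload(".*", "http://spark-worker-1:9001", 5))
}
```

The printed JSON could be sent as the body of the same POST request. The helper does no JSON escaping, so it is only suitable for simple values such as the ones used here.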

If a subscription has been created, we can check to see if it is firing by making a GET request to the /v2/subscriptions endpoint.

curl -X GET \
  'http://localhost:1026/v2/subscriptions/' \
  -H 'fiware-service: openiot' \
  -H 'fiware-servicepath: /'

[
  {
    "id": "5d76059d14eda92b0686f255",
    "description": "Notify Spark of all context changes",
    "status": "active",
    "subject": {
      "entities": [
        {
          "idPattern": ".*"
        }
      ],
      "condition": {
        "attrs": []
      }
    },
    "notification": {
      "timesSent": 362,
      "lastNotification": "2019-09-09T09:36:33.00Z",
      "attrs": [],
      "attrsFormat": "normalized",
      "http": {
        "url": "http://spark-worker-1:9001"
      },
      "lastSuccess": "2019-09-09T09:36:33.00Z",
      "lastSuccessCode": 200
    }
  }
]

Within the notification section of the response, you can see several additional attributes which describe the health of the subscription.

If the criteria of the subscription have been met, timesSent should be greater than 0. A zero value would indicate that the subject of the subscription is incorrect or the subscription has been created with the wrong fiware-servicepath or fiware-service header.

The lastNotification should be a recent timestamp. If this is not the case, then the devices are not regularly sending data. Remember to unlock the Smart Door and switch on the Smart Lamp.

The lastSuccess should match the lastNotification date. If this is not the case, then Cosmos is not receiving the subscription properly. Check that the hostname and port are correct.

Finally, check that the status of the subscription is active, since an expired subscription will not fire.
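The checks described above can be written down as a small function. This is a hypothetical sketch: `SubscriptionStatus` and `diagnose` are names invented here, the record only carries the fields discussed in the text, and timestamps are compared as plain strings rather than parsed.

```scala
// Hypothetical health checks mirroring the troubleshooting hints in the text.
object SubscriptionHealthSketch {
  // Only the fields discussed above; timestamps are kept as plain strings
  final case class SubscriptionStatus(
      status: String,
      timesSent: Int,
      lastNotification: Option[String],
      lastSuccess: Option[String]
  )

  // Returns one message per detected problem; an empty result means no problem was found
  def diagnose(s: SubscriptionStatus): Seq[String] = {
    val checks = Seq(
      (s.status != "active") -> "subscription is not active, so it will not fire",
      (s.timesSent == 0) -> "never fired: check the subject and the fiware-service headers",
      (s.lastSuccess != s.lastNotification) -> "last notification was not delivered: check hostname and port"
    )
    checks.collect { case (true, message) => message }
  }

  def main(args: Array[String]): Unit = {
    // Values copied from the sample response shown earlier
    val sample = SubscriptionStatus(
      "active", 362, Some("2019-09-09T09:36:33.00Z"), Some("2019-09-09T09:36:33.00Z"))
    println(diagnose(sample))
  }
}
```

Whether lastNotification is recent enough is deliberately left out, because that depends on how often the devices in a given installation are expected to report.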

Leave the subscription running for one minute. Then, the output on the console on which you ran the Spark job will be like the following:

Sensor(Bell,3)

Sensor(Door,4)

Sensor(Lamp,7)

Sensor(Motion,6)

package org.fiware.cosmos.tutorial

import org.apache.spark.SparkConf
import org.apache.spark.streaming.{Seconds, StreamingContext}
import org.fiware.cosmos.orion.spark.connector.OrionReceiver

object Logger {

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("Logger")
    val ssc = new StreamingContext(conf, Seconds(60))

    // Create Orion Receiver. Receive notifications on port 9001
    val eventStream = ssc.receiverStream(new OrionReceiver(9001))

    // Process event stream
    val processedDataStream = eventStream
      .flatMap(event => event.entities)
      .map(ent => {
        new Sensor(ent.`type`)
      })
      .countByValue()
      .window(Seconds(60))

    processedDataStream.print()

    ssc.start()
    ssc.awaitTermination()
  }

  case class Sensor(device: String)
}

The first lines of the program are aimed at importing the necessary dependencies, including the connector. The next step is to create an instance of the OrionReceiver using the class provided by the connector and to add it to the streaming context provided by Spark.

The OrionReceiver constructor accepts a port number (9001) as a parameter. This port is used to listen to the subscription notifications coming from Orion, which are converted to a DStream of NgsiEvent objects. The definition of these objects can be found within the Orion-Spark Connector documentation.

The stream processing consists of five separate steps. The first step (flatMap()) is performed in order to put together the entity objects of all the NGSI Events received in a period of time. Thereafter the code iterates over them (with the map() operation) and extracts the desired attributes. In this case, we are interested in the sensor type (Door, Motion, Bell or Lamp).

Within each iteration, we create a custom object with the property we need: the sensor type. For this purpose, we can define a case class as shown:

case class Sensor(device: String)

Thereafter we can count the created objects by the type of device (countByValue()) and perform operations such as window() on them.
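To see what the counting steps compute, the same chain can be applied to a single in-memory batch with plain Scala collections. This is a sketch under assumptions: `Entity` and `Event` are simplified stand-ins invented here, not the connector's classes, and `groupBy` followed by a size count takes the place of the streaming countByValue().

```scala
// Plain-Scala illustration of flatMap -> map -> count-by-value on one batch.
object CountByTypeSketch {
  // Simplified stand-ins for the connector's event and entity classes
  final case class Entity(id: String, `type`: String)
  final case class Event(entities: Seq[Entity])
  final case class Sensor(device: String)

  def countByType(batch: Seq[Event]): Map[Sensor, Int] =
    batch
      .flatMap(event => event.entities)   // gather the entities of every event
      .map(ent => Sensor(ent.`type`))     // keep only the sensor type
      .groupBy(identity)                  // group equal Sensor values together
      .map { case (sensor, hits) => sensor -> hits.size }

  def main(args: Array[String]): Unit = {
    val batch = Seq(
      Event(Seq(Entity("Lamp:001", "Lamp"), Entity("Motion:001", "Motion"))),
      Event(Seq(Entity("Lamp:002", "Lamp")))
    )
    countByType(batch).foreach(println)
  }
}
```

Because Sensor is a case class, two instances with the same device value compare as equal, which is what allows them to be grouped and counted together.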

After the processing, the results are output to the console:

processedDataStream.print()

The same example is provided for data in the NGSI-LD format (LoggerLD.scala). This example makes use of the NGSILDReceiver provided by the Orion Spark Connector in order to receive messages in the NGSI-LD format. The only part of the code that changes is the declaration of the receiver:

...
import org.fiware.cosmos.orion.spark.connector.NGSILDReceiver
...
val eventStream = ssc.receiverStream(new NGSILDReceiver(9001))
...

In order to run this job, you need to use the spark-submit command again, specifying the LoggerLD class instead of Logger:

/spark/bin/spark-submit \
  --class org.fiware.cosmos.tutorial.LoggerLD \
  --master spark://spark-master:7077 \
  --deploy-mode client \
  --conf "spark.driver.extraJavaOptions=-Dlog4jspark.root.logger=WARN,console" \
  /home/cosmos-examples/target/cosmos-examples-1.2.2.jar

The second example switches on a lamp when its motion sensor detects movement.

The dataflow stream uses the OrionReceiver operator in order to receive notifications, filters the input to only respond to motion sensors, and then uses the OrionSink to push processed context back to the Context Broker. You can find the source code of the example in org/fiware/cosmos/tutorial/Feedback.scala.

/spark/bin/spark-submit \
  --class org.fiware.cosmos.tutorial.Feedback \
  --master spark://spark-master:7077 \
  --deploy-mode client \
  --conf "spark.driver.extraJavaOptions=-Dlog4jspark.root.logger=WARN,console" \
  /home/cosmos-examples/target/cosmos-examples-1.2.2.jar

If the previous example has not been run, a new subscription will need to be set up. A narrower subscription can be set up to only trigger a notification when a motion sensor detects movement.

Note: If the previous subscription already exists, creating a second, narrower Motion-only subscription is unnecessary. There is a filter within the business logic of the Scala task itself.

curl -iX POST \
  'http://localhost:1026/v2/subscriptions' \
  -H 'Content-Type: application/json' \
  -H 'fiware-service: openiot' \
  -H 'fiware-servicepath: /' \
  -d '{
  "description": "Notify Spark of all context changes",
  "subject": {
    "entities": [
      {
        "idPattern": "Motion.*"
      }
    ]
  },
  "notification": {
    "http": {
      "url": "http://spark-worker-1:9001"
    }
  }
}'

Go to http://localhost:3000/device/monitor

Within any Store, unlock the door and wait. Once the door opens and the Motion sensor is triggered, the lamp will switch on directly.

package org.fiware.cosmos.tutorial

import org.apache.spark.SparkConf
import org.apache.spark.streaming.{Seconds, StreamingContext}
import org.fiware.cosmos.orion.spark.connector._

object Feedback {
  final val CONTENT_TYPE = ContentType.JSON
  final val METHOD = HTTPMethod.PATCH
  final val CONTENT = "{\n \"on\": {\n \"type\" : \"command\",\n \"value\" : \"\"\n }\n}"
  final val HEADERS = Map("fiware-service" -> "openiot", "fiware-servicepath" -> "/", "Accept" -> "*/*")

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("Feedback")
    val ssc = new StreamingContext(conf, Seconds(10))

    // Create Orion Receiver. Receive notifications on port 9001
    val eventStream = ssc.receiverStream(new OrionReceiver(9001))

    // Process event stream: keep entities whose count attribute is "1"
    val processedDataStream = eventStream
      .flatMap(event => event.entities)
      .filter(entity => (entity.attrs("count").value == "1"))
      .map(entity => new Sensor(entity.id))
      .window(Seconds(10))

    // Build one request per sensor, addressed to the lamp with the same numeric suffix
    val sinkStream = processedDataStream.map(sensor => {
      val url = "http://localhost:1026/v2/entities/Lamp:" + sensor.id.takeRight(3) + "/attrs"
      OrionSinkObject(CONTENT, url, CONTENT_TYPE, METHOD, HEADERS)
    })

    // Add Orion Sink
    OrionSink.addSink(sinkStream)

    // Print the processed sensors to the console
    processedDataStream.print()

    ssc.start()
    ssc.awaitTermination()
  }

  case class Sensor(id: String)
}

As you can see, it is similar to the previous example. The main difference is that it writes the processed data back to the Context Broker through the OrionSink.

The arguments of the OrionSinkObject are:

- Message: "{\n \"on\": {\n \"type\" : \"command\",\n \"value\" : \"\"\n }\n}". We send the 'on' command.
- URL: "http://localhost:1026/v2/entities/Lamp:"+sensor.id.takeRight(3)+"/attrs". takeRight(3) gets the number of the room, for example '001'.
- Content Type: ContentType.JSON.
- HTTP Method: HTTPMethod.PATCH.
- Headers: Map("fiware-service" -> "openiot","fiware-servicepath" -> "/","Accept" -> "*/*"). Optional parameter. We add the headers we need in the HTTP Request.
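The URL argument is the only part of the request that varies per sensor, so it is worth isolating. The sketch below is an illustration in plain Scala: the helper name `lampAttrsUrl` is hypothetical, and the entity ID format `Motion:001` is an assumption based on the '001' room number mentioned above.

```scala
// Hypothetical helper that derives the lamp URL from a motion sensor ID.
object LampUrlSketch {
  // Base URL taken from the example code above
  val BrokerUrl = "http://localhost:1026"

  // Takes the last three characters of the sensor ID as the room number
  def lampAttrsUrl(motionSensorId: String): String =
    BrokerUrl + "/v2/entities/Lamp:" + motionSensorId.takeRight(3) + "/attrs"

  def main(args: Array[String]): Unit =
    println(lampAttrsUrl("Motion:001"))
}
```

Note that takeRight(3) relies on every ID ending in exactly three digits. An ID with a shorter or longer numeric suffix would produce the wrong lamp ID, so a larger installation might prefer to split the ID on the colon instead.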

If you would rather use Flink as your data processing engine, this tutorial is available for Flink as well.

The operations performed on data in this tutorial were very simple. If you would like to know how to set up a scenario for performing real-time predictions using Machine Learning, check out the demo presented at the FIWARE Global Summit in Berlin (2019).

If you want to learn how to add more complexity to your application by adding advanced features, you can find out by reading the other tutorials in this series.

MIT © 2020 FIWARE Foundation e.V.